Automating the continuous integration and delivery process

Many organisations are reaping the benefits of increased agility and faster iteration from adopting DevOps tools and techniques, both in their software engineering practices and in non-software development areas such as infrastructure management. Let’s look at why the DevOps process is so important for organisations, exploring our own continuous integration and continuous delivery / deployment (CI/CD) journey since 2012.

Using DevOps for software development and infrastructure

DevOps is considered the new way that IT services are deployed, making the older Waterfall-style processes for design, test, and deployment somewhat redundant.

DevOps allows businesses to adopt pipeline-based delivery of their services or products, whether the focus is infrastructure delivery or software engineering. Continuous integration and continuous delivery (CI/CD) is now making waves across the IT industry, with major impacts on productivity and deployment agility in the infrastructure space, an area that has historically used a different approach to managing iterations and releases. Now, we see infrastructure teams adopt automation, integration and software-defined configurations for networking, operating systems, and application deployment, such that the end-to-end service stack falls completely under the new DevOps paradigm.

This groundswell of interest in DevOps has resulted in a multitude of software tools to support CI/CD pipelines, as applicable to infrastructure management as they are to software development, testing and deployment.

Catalyst began designing our CI process back in 2012. Since then, we haven’t looked back. Using our current platform, GoCD, we’ve triggered 132,497 pipelines across 414,082 unique pipeline stages, and run a whopping 50,239 deployments into multiple environments: staging, user acceptance testing (UAT) and production.

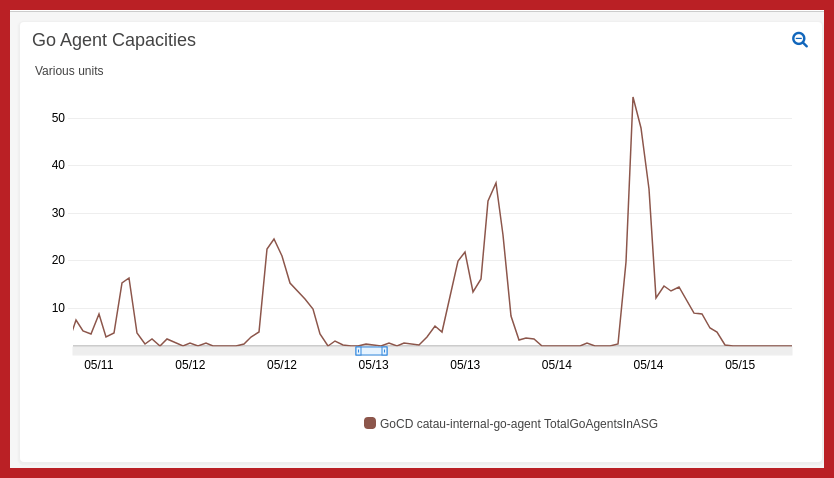

Cost effective scaling when you need it

A great feature of our automation pipelines is that we auto-scale build agents. This allows us to automatically tailor the build agent to the needs of the deployment, based on information fed in at the build stage.

This level of flexibility makes deployments fast and incredibly cost effective. All in, Catalyst has integrated 14,736 different build agents into our deployments. Each of these pipelines helps to streamline our service delivery and to stabilise the build state, so that the security of our final production services is more resilient to build-level errors, something to which manual deployments are particularly prone.
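How the sizing decision is made is not described here, so the following is only a minimal sketch of the idea: given requirements reported at the build stage, pick the smallest agent that fits. The profile names, sizes, and the `pick_agent_profile` function are all hypothetical and are not part of GoCD.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AgentProfile:
    """A hypothetical build agent size; names and numbers are illustrative."""
    name: str
    cpus: int
    memory_gb: int


# Hypothetical profiles, ordered from smallest (cheapest) to largest.
PROFILES: List[AgentProfile] = [
    AgentProfile("small", cpus=2, memory_gb=4),
    AgentProfile("medium", cpus=4, memory_gb=8),
    AgentProfile("large", cpus=8, memory_gb=16),
]


def pick_agent_profile(required_cpus: int, required_memory_gb: int) -> AgentProfile:
    """Return the smallest profile that satisfies the stated requirements."""
    for profile in PROFILES:
        if profile.cpus >= required_cpus and profile.memory_gb >= required_memory_gb:
            return profile
    raise ValueError("no agent profile is large enough for this build")
```

Choosing the smallest profile that fits is what keeps the approach cost effective: a light build never occupies a large agent, while a heavy build still gets the resources it asks for.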

Making pipelines more consistent

Software development projects have two major considerations that affect their success or failure. The first is the set of external factors that sit outside the development process itself, such as the changing scope of development and its impact on costs. The second is the set of internal elements of the development process or pipeline itself.

Managing the software build, test and deployment elements of a development project manually can introduce bugs and errors across the development lifecycle. Why? Because people are human.

Manual steps can make error identification incredibly hard, especially where the issues manifest as product issues (even if they are simple build errors). Bugs like these can take days to fix, which can seriously impact the number of new features or enhancements you can deploy in your next iteration.

Systematic processes

By introducing automation into your process, you introduce repeatability: a systematic process that executes the same steps in the same way, every time.

The use of DevOps allows teams to shortcut the iteration process, so that issues can be addressed and updates released quickly. This leaves teams time to focus on more valuable activities like user story development and performance analysis.

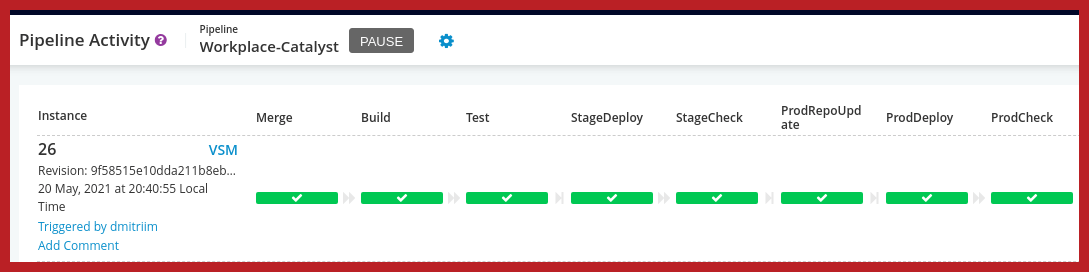

Automation with GoCD

With a wealth of experience in DevOps and the CI/CD process, the team at Catalyst uses GoCD across all our pipelines.

A tried and tested deployment process

Our end-to-end deployment process includes:

Merge

◦ Merge from upstream for security;

◦ Merge upstream sub-modules for plugin updates.

Build

◦ Build a release artefact, usually a container, but it can also be a tarball or Debian package;

◦ Push the artefact into a Docker registry or Debian repository.

Test

◦ Run unit tests;

◦ Run Behat tests;

◦ Report on any failed tests in the relevant internal chat channel.

Deploy to staging

◦ Start scheduled downtime;

◦ Test whether an upgrade is required. If not, skip maintenance mode and allow the application to be updated online with no outage;

◦ Start scheduled maintenance mode;

◦ Run the application upgrade scripts for any schema changes;

◦ A/B cut over the web servers and scheduled task runners to the new code;

◦ Purge any external caching like Varnish or CloudFront;

◦ End maintenance mode;

◦ End downtime.

Post deployment smoke tests

◦ Active checks against the application to ensure it runs correctly.

Production – pre-release

◦ Confirm release artefact is available;

◦ Pre-warm the production infrastructure with the new image to speed up deployments and reduce downtime.

Production deployment

◦ Same process as staging with the same release artefact;

◦ Additional steps to create a database clone for fast rollback if required.

Post-production deployment smoke tests

◦ More active checks to ensure production is stable.

Post-production deployment clean-up

◦ Clean up the database clone once it is confirmed that rollback is not required.
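The conditional part of the deploy stage (skip maintenance mode when no upgrade is required) can be sketched as a small planning function. This is an illustration of the ordering listed above, not our pipeline code; the function name `plan_deployment` and the step labels are hypothetical.

```python
from typing import List


def plan_deployment(upgrade_required: bool) -> List[str]:
    """Return the ordered step names for one staging or production deploy."""
    steps = ["start scheduled downtime"]
    if upgrade_required:
        # Schema changes need the application offline while scripts run.
        steps += ["start maintenance mode", "run upgrade scripts"]
    # The cutover and cache purge happen on every deploy.
    steps += ["cut over web servers and task runners", "purge external caches"]
    if upgrade_required:
        steps.append("end maintenance mode")
    steps.append("end downtime")
    return steps
```

Calling `plan_deployment(False)` yields only the downtime, cutover and cache purge steps, which matches the online update path with no outage; `plan_deployment(True)` wraps the upgrade scripts and cutover in maintenance mode.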

Our team has integrated GoCD into every aspect of our deployment lifecycle. In fact, across the 4,681 deployments where we have had GoCD deploy itself as part of the process, we’ve learned just how important this step of automation is in speeding up the delivery of new CI/CD features.

Environment updates

For infrastructure projects, GoCD has helped us undertake rapid environment updates, with automated refresh of capabilities. Complex processes, such as database maintenance, can all be automated. For example:

• Backup production database with pg_dump or mysqldump

• Restore the dump to an isolated location

• Run data sanitising scripts to reduce log size and anonymise data, removing personally identifiable information (and reducing privacy risks)

• Reconfigure settings for data stored in the database, such as SSO endpoints and integration endpoints

• Restore the database to a UAT or staging site

• Run a variety of automated tests and review the results

• Reconfigure UAT or staging environments to point to the new database

• Finally, clean up the old database and close the pipeline
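As a rough sketch of the first three steps for a PostgreSQL database, the commands could be assembled as below. The function name `refresh_commands`, the database names, and the file paths are placeholders invented for illustration; the function only builds the command lines and does not run them.

```python
from typing import List


def refresh_commands(prod_db: str, scratch_db: str, dump_path: str,
                     sanitise_sql: str) -> List[List[str]]:
    """Build (but do not run) the commands for backup, restore and sanitise."""
    return [
        # 1. Back up production in PostgreSQL's custom archive format.
        ["pg_dump", "--format=custom", f"--file={dump_path}", prod_db],
        # 2. Restore into an isolated scratch database (assumed to exist already).
        ["pg_restore", f"--dbname={scratch_db}", "--no-owner", dump_path],
        # 3. Run the sanitising script, stopping at the first SQL error.
        ["psql", f"--dbname={scratch_db}", "--set=ON_ERROR_STOP=1",
         f"--file={sanitise_sql}"],
    ]
```

Each command list can then be passed to `subprocess.run(cmd, check=True)`, so that a failing step stops the pipeline before unsanitised data reaches UAT or staging.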

Proven, trusted CI/CD Services

We love using GoCD, as it has transformed how we build and deploy solutions for our clients. It has created so many options for the way we work, and we’ve honed our methods over the years to the point where we have patterns for almost every conceivable use case.